This mind-reading technology can decode your brain accurately

New York: Researchers have developed a novel mind-reading technology that uses artificial intelligence to decode what the human brain is seeing, an advance that could lead to new insights into brain function.

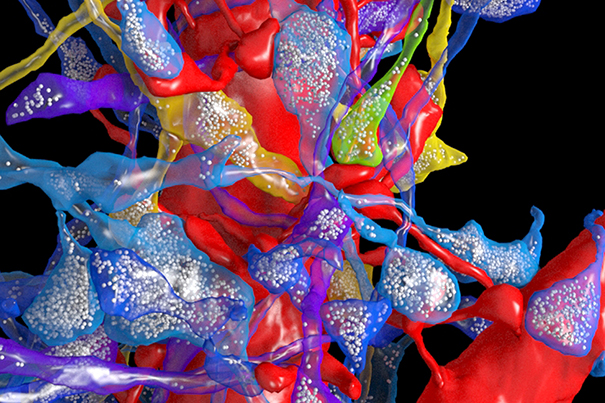

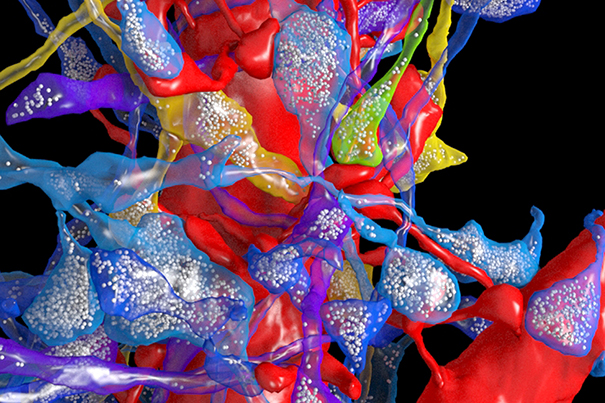

Convolutional neural networks, a form of “deep-learning” algorithm instrumental in facial recognition technology in computers and smartphones, have previously been used to study how the brain processes static images.

This is the first time the approach has been used to understand how the brain processes natural scenes as a person watches them, a step toward decoding the brain while people try to make sense of complex and dynamic visual surroundings, said lead author Haiguang Wen, a doctoral student at Purdue University in Indiana, US.

For the study, which appears in the journal Cerebral Cortex, the team acquired 11.5 hours of functional magnetic resonance imaging (fMRI) data from each of three female subjects as they watched 972 video clips, including clips of people or animals in action and of nature scenes.

Using the convolutional neural network model, the team was able to accurately decode the fMRI data recorded from the brain’s visual cortex while the subjects watched the videos.

“I think what is a unique aspect of this work is that we are doing the decoding nearly in real time, as the subjects are watching the video. We scan the brain every two seconds, and the model rebuilds the visual experience as it occurs,” Wen said.

The finding is important because it demonstrates the potential for broad applications of such models to study brain function, even for people with visual deficits.

“We think we are entering a new era of machine intelligence and neuroscience where research is focusing on the intersection of these two important fields,” explained Zhongming Liu, an assistant professor at the university. (IANS)